2010 update:

Lo, the Web Performance Advent Calendar hath moved

10/2011 update: You can also read this article in a Romanian translation (by Web Geek Science)

Dec 21: This post is part of the 2009 performance advent calendar experiment. Stay tuned for the articles to come.

Perceived page loading time is just as important as the real loading time. And when it comes to user perception, visible indication of progress is always good. The user gets feedback that something is going on (and in the right direction) and feels much better.

Using multiple content flushes allows you to improve both the real and the perceived performance. Let's see how.

The head flush()

While your server is busy stitching the HTML together from different sources (web services, a database, etc.), the browser, and hence the user, just sits and waits. Why not put the browser to work and have it start downloading components we know will be needed anyway, such as the logo, the sprite, CSS, JavaScript...? While the server is busy, you can send part of the HTML, for example the whole <head> of the document. In there you can put the references to external components such as the CSS, so the browser gets a head start downloading them while it waits for the rest of the HTML response.

```
<html>
<head>
  <title>the page</title>
  <link rel=".." href="my.css" />
  <script src="my.js"></script>
</head>
<?php flush(); ?>
<body>
...
```
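The snippet above uses PHP's flush(). As a rough sketch of the same idea in Python, a WSGI app can return a generator and yield the head before doing the slow work. This assumes a WSGI server that, as PEP 3333 requires, does not delay transmitting yielded blocks; `slow_body()` is a hypothetical stand-in for the database and web service calls.

```python
import time
from typing import Callable, Iterator

# Everything up to </head> is cheap to produce, so it goes out first.
HEAD = (
    b"<html><head><title>the page</title>"
    b'<link rel="stylesheet" href="my.css" />'
    b'<script src="my.js"></script></head>'
)


def slow_body() -> bytes:
    """Hypothetical stand-in for stitching HTML from web services and a database."""
    time.sleep(0.2)
    return b"<body>...</body></html>"


def app(environ: dict, start_response: Callable) -> Iterator[bytes]:
    # No Content-Length header: the total size isn't known until the body is built.
    start_response("200 OK", [("Content-Type", "text/html; charset=utf-8")])
    yield HEAD         # the "flush": the browser can start fetching my.css and my.js
    yield slow_body()  # the expensive part happens only after the head is out
```

Each `yield` plays the role of `flush()` here; whether the pieces actually travel as HTTP/1.1 chunks is up to the server you run the app under.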

Doing something like this results in shorter waterfalls, because more downloads can happen in parallel. In the waterfall below, the page is not yet complete at 0.4 seconds, yet the browser has already requested more components.

One step further - multiple flushes

While keeping the browser busy is good and the whole page loads faster, can we do better? How about letting the user see something while the server is still busy? Remember: show something "in a blink of an eye". And how about flushing several times, rendering the page in stages? This helps show usable partial versions of the page without waiting for a potentially slow-loading page or for blocking JavaScript to load.

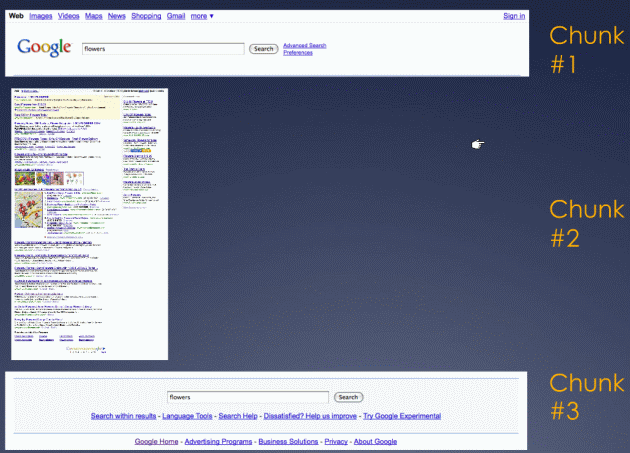

Here's an example - Google search results.

The header part of that page (chunk #1) doesn't need any complicated logic. True, the page title and the pre-filled input box are dynamic, but they are a simple echo of the user input, nothing that requires complex work. So out goes the header. Notice that the number of search results is not visible yet. This chunk contains the logo, so the sprite gets downloaded. If this page were using external CSS, it would be included in the head too.

Then, the search results, the meat of the page. Out it goes, as a static HTML chunk #2.

At this point the page is almost done, but not quite. There's some progressive enhancement of the page which requires JavaScript, and JavaScript blocks. So include it in the footer as chunk #3.

The page is usable even without the JavaScript and without the footer. The user mostly cares about the results, so chunk #2 is what matters most. Chunk #3 can even get lost in transfer. As for chunk #1, it's just there to give feedback that "hey, we're working on your query". The first chunk actually tricks the user into believing that the query is already done. "Heck", concludes the user seeing that the page is already coming up, "that was FAST" 🙂
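A rough Python sketch of this three-chunk strategy, again as a WSGI generator. The markup is illustrative, not Google's, and `search()` is a hypothetical placeholder for the expensive backend call.

```python
import html
from typing import Callable, Iterator, List
from urllib.parse import parse_qs


def search(query: str) -> List[str]:
    """Hypothetical backend call; imagine this takes a while."""
    return [f"Result {i} for {query}" for i in range(1, 4)]


def app(environ: dict, start_response: Callable) -> Iterator[bytes]:
    query = parse_qs(environ.get("QUERY_STRING", "")).get("q", [""])[0]
    q = html.escape(query)
    start_response("200 OK", [("Content-Type", "text/html; charset=utf-8")])

    # Chunk #1: header. It only echoes user input, so it can go out immediately.
    yield (f"<html><head><title>{q} - Search</title></head><body>"
           f'<form><input name="q" value="{q}"></form>').encode()

    # Chunk #2: the results, the part the user actually came for.
    items = "".join(f"<li>{html.escape(r)}</li>" for r in search(query))
    yield f"<ol>{items}</ol>".encode()

    # Chunk #3: footer with the blocking JavaScript for progressive enhancement.
    yield b'<script src="enhance.js"></script></body></html>'
```

If the connection drops before the last `yield`, the user still has the header and the results, which is the point of the ordering.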

Boring details - HTTP 1.1 chunked encoding

So how does this actually work? How come the HTML is served in parts?

The answer is - HTTP 1.1 chunked encoding. A normal HTTP response looks like:

```
Headers...
[One empty line]
<html><body>response...
```

A chunked response would be like:

```
Headers...
Transfer-Encoding: chunked
More headers...

size of chunk #1
<html><body>...chunk #1...
size of chunk #2
...chunk #2...
size of chunk #3
...chunk #3 </body></html>
0 (meaning "the end!")
```

The chunk sizes are given as hexadecimal numbers. Here's an example response (from the Wikipedia article):

```
HTTP/1.1 200 OK
Content-Type: text/plain
Transfer-Encoding: chunked

25
This is the data in the first chunk

1C
and this is the second one

0

```
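To make the framing concrete, here is a minimal Python encoder (a sketch, not taken from any particular server) that reproduces the example above: each chunk is its size in hex, CRLF, the data, CRLF, and a zero-size chunk ends the body.

```python
from typing import Iterable


def encode_chunked(chunks: Iterable[bytes]) -> bytes:
    """Frame byte strings as an HTTP/1.1 chunked body."""
    out = bytearray()
    for chunk in chunks:
        if not chunk:
            continue  # an empty chunk would be read as the end of the body
        out += f"{len(chunk):X}\r\n".encode("ascii")  # size line, in hex
        out += chunk + b"\r\n"                         # data, then CRLF
    out += b"0\r\n\r\n"  # last chunk, then the empty line that closes the (empty) trailer
    return bytes(out)


body = encode_chunked([
    b"This is the data in the first chunk\r\n",
    b"and this is the second one\r\n",
])
```

Note that the line break inside each piece of data counts toward its size: 35 characters plus CRLF is 37, which is 0x25, and 26 plus CRLF is 28, which is 0x1C.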

Chunking/flushing strategies

There are basically two paths you can take when it comes to chunking:

- Application-level chunking: your web app knows when to flush, based on some logic. The Google search above is an example of application-level flushing; the header, body, and footer are parts of the page known to the web application.

- Server-level chunking: your application doesn't worry about how the content is delivered, but leaves this task to the server. The server can choose some strategy for flushing, for example once every 4K of output. Google search does this when the user agent doesn't support gzip: it flushes every 4K. Bing.com does something similar, flushing about every 1K (sometimes 2K, sometimes less) regardless of whether the user agent supports gzip. Interestingly enough, Bing's first chunk is often non-readable characters, just something that tells the browser "hey, I'm alive, here's your first byte".
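Server-level flushing can be approximated with a small re-buffering wrapper: collect whatever the application writes and emit a piece every time the buffer reaches a threshold. A Python sketch, assuming a 4 KB threshold to match the Google example (a real server would make this configurable):

```python
from typing import Iterable, Iterator


def rechunk(pieces: Iterable[bytes], size: int = 4096) -> Iterator[bytes]:
    """Regroup arbitrary output into pieces of `size` bytes (the last may be shorter)."""
    buf = bytearray()
    for piece in pieces:
        buf += piece
        while len(buf) >= size:
            yield bytes(buf[:size])  # flush a full block, even mid-tag
            del buf[:size]
    if buf:
        yield bytes(buf)  # whatever is left when the application is done
```

Because the cut ignores markup, a block can end in the middle of an HTML tag, just like the server-level chunks observed on Amazon below.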

Amazon is an interesting example of mixing both strategies: sometimes the chunking looks server-level (e.g. a chunk ends in the middle of an HTML tag), but sometimes a chunk seems to contain (or wrap up) a page section. Amazon is also a good example of focusing on what's important on a page (Why is the user here? What do they want? What do we want them to do?) and making sure it's rendered first.

The areas I've marked in this screenshot do not correspond to chunks exactly, but they show how the page renders progressively, using a combination of chunked response and source order:

- #1 - header. Every page has one. Get done with it.

- #2 - buy now. This is what we want the user to do.

- #3 - image. The user wants to see what they're buying. Probably also helps Amazon minimize merchandise returns 🙂

- #4 - title/price. Kind of important too.

The rest of the page - reviews, comments, also buy... all this can wait, it's all secondary. Most of it is way below the fold anyway.

Tools: none

Unfortunately, as far as I know, there's no tool that offers visibility into those chunks: what each one contains and what it looks like.

Fiddler lets you see the encoded chunked response, but that doesn't help much. At least it tells you that the response was chunked: under Inspectors/Transformer there is a "Chunked Transfer-Encoding" checkbox.

Also in Fiddler, under the next tab (Headers), you can see the chunked encoding header. Fiddler also helpfully tells you that the response has been encoded (the yellow message on top).

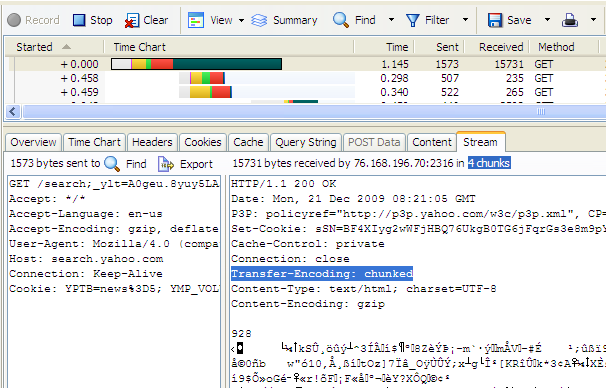

HttpWatch lets you see the incomprehensible raw response, but it also tells you the number of chunks. Note that the number includes the final 0 in the response, so when it says 4 chunks it actually means 3.
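The extra chunk comes from the framing itself: every chunked body ends with a zero-size chunk. A minimal Python decoder (a sketch that strips chunk extensions and does not parse trailers) makes this visible by counting size lines separately from data chunks:

```python
from typing import List, Tuple


def decode_chunked(raw: bytes) -> Tuple[List[bytes], int]:
    """Split a chunked body into data chunks; also return how many size lines
    were read, including the final zero-size chunk."""
    chunks: List[bytes] = []
    pos = 0
    size_lines = 0
    while True:
        eol = raw.index(b"\r\n", pos)
        size = int(raw[pos:eol].split(b";")[0], 16)  # drop any ";ext" part
        size_lines += 1
        if size == 0:
            return chunks, size_lines  # trailer section (if any) is ignored
        start = eol + 2
        chunks.append(raw[start:start + size])
        pos = start + size + 2  # skip the CRLF that follows the data
```

For the Wikipedia example this gives two data chunks but three size lines, so a tool that counts every size line reports one more chunk than there are pieces of content.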

I also tried to fill the void in the tools department by attempting a Firefox extension. Unfortunately it didn't work: I couldn't find an API exposed to extensions that would give me access to the raw encoded response. It looks like it would be possible as an extension to HttpWatch or Fiddler, though; both offer extensibility, and both show the raw response.

For my own use I wrote a PHP script that requests a page and gives me the chunks ungzipped. It's very primitive, but you can give it a shot here. Try it with Yahoo! Search, for example.

Works with gzip!

A common concern is whether chunked encoding works together with gzipping the response. Yes, it does. In this presentation Steve Souders sheds some light (PPT, see slide #66) on how to address common issues and also gives flush() equivalents in languages other than PHP.
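Why the two coexist: the whole response is compressed as one gzip stream, and the compressor is sync-flushed at each flush point, so every chunk carries complete deflate blocks that the browser can decompress as soon as they arrive. A Python sketch of that idea using the standard `zlib` module (`wbits=31` selects the gzip container); whether a particular server module does exactly this is an assumption, the sketch only shows that it can work:

```python
import zlib
from typing import Iterable, Iterator


def gzip_chunks(parts: Iterable[bytes]) -> Iterator[bytes]:
    """Compress parts into ONE gzip stream, emitting output at each part boundary."""
    comp = zlib.compressobj(wbits=31)  # 31 = 16 + 15: gzip header and trailer
    for part in parts:
        # Z_SYNC_FLUSH pushes out everything compressed so far without ending the stream.
        yield comp.compress(part) + comp.flush(zlib.Z_SYNC_FLUSH)
    yield comp.flush()  # Z_FINISH: final block plus the gzip trailer (CRC, length)
```

On the receiving side, a single `zlib.decompressobj(wbits=31)` fed one chunk at a time gives back each part right away. The usual failure mode is a buffer somewhere along the way holding data back until it fills, which is what the items below are about.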

There are a number of things that can get in the way of successfully combining chunked encoding and gzip, including:

- call ob_flush() in addition to flush(), and be careful if you have several output buffers started: you may need to iterate and flush all of them

- some browsers may require a minimal amount of content before they start parsing; IE needs at least 256 bytes

- you may need a newer version of Apache

- DeflateBufferSize in Apache may be set too high for your chunk size

- check the user-contributed comments in the php.net manual for flush() for helpful advice and ideas

- there's ob_implicit_flush(), which turns on implicit flushing so that output is flushed after every output call instead of you calling flush() every time
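For the 256-byte point in the list above, a common workaround is to pad the first flush. A Python sketch, assuming an HTML comment full of spaces is acceptable padding and that the threshold applies to the decoded content; `pad_first_chunk` is a hypothetical helper name used only for illustration:

```python
def pad_first_chunk(chunk: bytes, minimum: int = 256) -> bytes:
    """Append an HTML comment so the chunk is at least `minimum` bytes long."""
    shortfall = minimum - len(chunk)
    if shortfall <= 0:
        return chunk  # already big enough, leave it alone
    # "<!--" and "-->" take 7 bytes; spaces fill the rest (never fewer than 0).
    spaces = max(shortfall - 7, 0)
    return chunk + b"<!--" + b" " * spaces + b"-->"
```

Spaces compress to almost nothing, so with gzip on, the padding costs very little on the wire.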

Do it!

It may be tricky to implement multiple (or even single) flushing, but it's well worth it. There are some server setup hurdles when it comes to gzip, but once you figure them out, you only do it once. As a reward you get faster loading times plus progressive rendering, so your page not only has a faster time-to-onload but also feels that way.